🦞 OpenClaw Unpacked

Building Persistent, Action-Oriented AI for the Real World

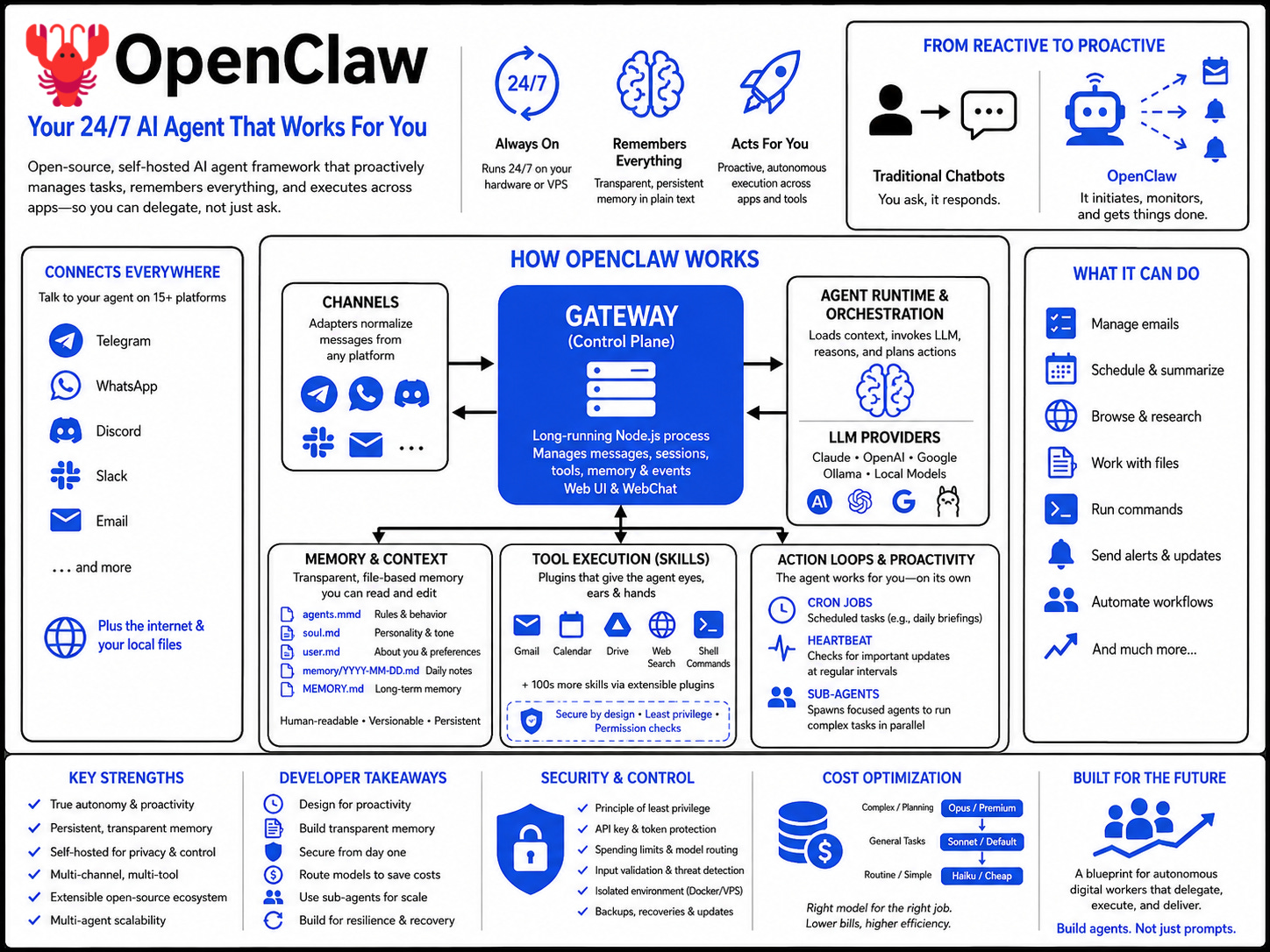

The promise of AI has long extended beyond mere conversation to truly autonomous systems that act on our behalf. Yet, most of today’s widely adopted AI tools remain reactive. You go to them, type a prompt, and they respond. They’re powerful, but they largely lack the proactive agency, persistent memory, and integrated execution capabilities needed to function as genuine digital assistants. This gap limits their utility to transient tasks, preventing them from becoming integral, always-on components of our personal and professional workflows.

The vision of a “24/7 Jarvis” that proactively manages tasks, monitors systems, and orchestrates actions across applications has remained elusive. Building such an agent necessitates solving complex challenges: secure integration with personal data, continuous operation, robust tool use, and sophisticated memory management that doesn’t “forget” context after each interaction.

OpenClaw emerges as a compelling answer to this challenge, demonstrating a practical architecture for deploying persistent, autonomous AI agents. It represents a significant step towards computer-use agents that can truly work for you, transforming our interactions with digital environments from command-and-response to delegation and proactive execution.

What Is OpenClaw?

OpenClaw is an open-source, self-hosted AI agent framework designed to be a proactive personal assistant. Unlike conventional AI chatbots like ChatGPT or Claude’s web interface, which you “go to” for help, OpenClaw runs 24/7 on your own hardware (or a VPS) and comes to you. It connects large language models (LLMs) with your local files, messaging applications, and the broader internet, enabling the AI to execute real-world tasks.

Initially known as Clawdbot and later Moltbot, it was developed by Peter Steinberger and has gained significant traction for its “local-first” approach. OpenClaw itself is not an LLM; instead, it acts as an orchestration layer, connecting to various AI models via API keys and leveraging a “skills” system to perform actions. This means the intelligence comes from external LLM providers (e.g., Anthropic, OpenAI, Google, or local models via Ollama), while OpenClaw provides the execution environment, memory, and multi-channel communication gateway. It effectively gives LLMs “eyes, ears, and hands” to interact with your digital world.

Its core distinction lies in its ability to operate autonomously and persistently. It integrates with common messaging platforms like Telegram, WhatsApp, Discord, and Slack, serving as its primary user interface. Through these channels, you can instruct your OpenClaw agent to perform actions such as running shell commands, managing emails, scheduling meetings, browsing the web, and interacting with various Google Workspace applications.

How OpenClaw Works

OpenClaw’s architecture is centered around a single, long-running Node.js process called the Gateway. This Gateway functions as the control plane, managing all message ingress/egress, session states, tool dispatch, and events. It’s the “operating system” for your AI agent, providing the execution environment for the LLM’s intelligence.

Here’s a breakdown of its key architectural components and workflow:

Gateway (Control Plane): The central hub that runs continuously on your machine or VPS. It listens for inbound messages from connected communication channels and routes them to the appropriate agent session. It also serves a web-based Control UI and WebChat interface for direct interaction and debugging.

Channels: These are adapters that normalize messages from various platforms (Telegram, WhatsApp, Discord, Slack, etc.) into a consistent format for the agent. OpenClaw supports over 15 messaging platforms and offers seamless multi-channel interaction.

Agent Runtime & Orchestration: When a message arrives, the Gateway routes it to an agent session. This session loads the relevant context (system prompt, history, attachments) and invokes the configured LLM. The LLM then reasons about the task, decides on actions, and streams responses, potentially requesting the use of tools or skills.
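To make the channel-to-session flow concrete, here is a minimal sketch, not OpenClaw's actual code. The `InboundMessage`, `Session`, and `Gateway` names are hypothetical, and the two payload shapes are simplified assumptions loosely modeled on Telegram-style and Slack-style events.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class InboundMessage:
    """Channel-agnostic message shape (hypothetical, for illustration)."""
    channel: str
    sender_id: str
    text: str


def from_telegram(update: dict) -> InboundMessage:
    # Assumed, simplified payload: {"message": {"from": {"id": ...}, "text": ...}}
    msg = update["message"]
    return InboundMessage("telegram", str(msg["from"]["id"]), msg.get("text", ""))


def from_slack(event: dict) -> InboundMessage:
    # Assumed, simplified payload: {"user": ..., "text": ...}
    return InboundMessage("slack", event["user"], event.get("text", ""))


@dataclass
class Session:
    """Per-sender conversation state; a real session would hold far more."""
    history: list = field(default_factory=list)


class Gateway:
    """Routes each normalized message to a session keyed by channel and sender."""

    def __init__(self) -> None:
        self.sessions: dict = {}

    def route(self, message: InboundMessage) -> Session:
        key = (message.channel, message.sender_id)
        session = self.sessions.setdefault(key, Session())
        session.history.append(message.text)
        return session
```

Because every adapter returns the same shape, the routing code never needs to know which platform a message came from. Adding a channel means adding one adapter function.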

Memory and Context Handling: A cornerstone of OpenClaw is its persistent, transparent memory system. Unlike many AI systems that rely on opaque vector databases, OpenClaw stores all configuration data and interaction history locally as human-readable Markdown files. These files include:

agents.md: Defines the general rules and behavior of your bot.

soul.md: Captures the bot’s personality, tone, and stable instructions.

user.md: Stores information about you: your name, time zone, preferences, and work context.

memory/YYYY-MM-DD.md (Daily Notes): Running context and observations.

MEMORY.md: Curated long-term memory for durable facts, preferences, and decisions.

This file-based approach allows developers to inspect, edit, and even version control the agent’s “brain” directly, ensuring transparency and enabling the bot to learn and improve over time. A memory flush before compaction, together with session memory, lets context carry between conversations and prevents it from being lost when history is compressed.
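As a rough illustration of why plain files are convenient, the sketch below assembles a prompt preamble from whichever memory files exist and appends to a daily note. The file names mirror those described above. The loading order, the `build_context` and `append_daily_note` helpers, and the bullet format of notes are assumptions, not OpenClaw's implementation.

```python
from datetime import date
from pathlib import Path

# Stable files, in an assumed loading order.
STABLE_FILES = ["agents.md", "soul.md", "user.md", "MEMORY.md"]


def daily_note_path(workspace: Path, today: date) -> Path:
    """Return the path of the daily note for the given date."""
    return workspace / "memory" / f"{today.isoformat()}.md"


def build_context(workspace: Path, today: date) -> str:
    """Concatenate whichever memory files exist into one prompt preamble."""
    candidates = [workspace / name for name in STABLE_FILES]
    candidates.append(daily_note_path(workspace, today))
    sections = []
    for path in candidates:
        if path.is_file():
            body = path.read_text(encoding="utf-8").strip()
            label = path.relative_to(workspace).as_posix()
            sections.append(f"## {label}\n{body}")
    return "\n\n".join(sections)


def append_daily_note(workspace: Path, today: date, note: str) -> Path:
    """Append one observation to today's daily note, creating it if needed."""
    path = daily_note_path(workspace, today)
    path.parent.mkdir(parents=True, exist_ok=True)
    with path.open("a", encoding="utf-8") as handle:
        handle.write(f"- {note}\n")
    return path
```

Since everything is ordinary text on disk, the same directory can be placed under version control, and a bad memory entry can be fixed with a text editor.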

Tool Execution (Skills): “Skills” are essentially plugins that extend the agent’s capabilities. These can range from integrating with Google Workspace (Gmail, Calendar, Drive) to web search (e.g., Brave Search API) and executing shell commands. Skills are stored as directories containing a SKILL.md file with metadata and instructions for usage.

Action Loops & Proactive Behavior:

Cron Jobs: These are scheduled tasks that your bot performs automatically at set times, such as daily morning briefings summarizing emails and calendar events.

Heartbeat: The agent “wakes up” at configurable intervals (e.g., every 30 minutes) to proactively check for things that need attention, like urgent emails or upcoming calendar events.

Sub-Agents: For complex tasks or parallel processing, OpenClaw can spin up multiple “sub-agents.” These are temporary, task-scoped agents that work on different parts of a job simultaneously, reporting back to the main agent upon completion. Each sub-agent runs in its own isolated session and can be configured with specific tools and even cheaper LLMs for cost efficiency.
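The sub-agent pattern described above is essentially fan-out and collect. The following is a minimal sketch under stated assumptions: `SubTask`, `run_sub_agents`, and `fake_agent` are hypothetical names, threads stand in for isolated sessions, and the stub function stands in for a real LLM call.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class SubTask:
    name: str
    prompt: str
    model: str  # hypothetical model label, e.g. a cheaper tier


def run_sub_agents(tasks: list, run_one: Callable[[SubTask], str]) -> dict:
    """Run each task in its own worker and collect results by task name."""
    with ThreadPoolExecutor(max_workers=max(1, len(tasks))) as pool:
        results = list(pool.map(run_one, tasks))  # map preserves input order
    return {task.name: result for task, result in zip(tasks, results)}


def fake_agent(task: SubTask) -> str:
    # Stand-in for a real LLM call made inside an isolated session.
    return f"[{task.model}] findings for: {task.prompt}"
```

A main agent would call `run_sub_agents` with one task per research topic and then synthesize the returned dictionary into a single answer.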

What The Demos Reveal

The practical demonstrations of OpenClaw highlight its unique blend of autonomy, extensibility, and user control, while also revealing key operational considerations:

Proactive Automation as a Core Capability: The ability to configure daily briefings (e.g., weather, calendar, urgent emails) via a cron job and have the agent proactively monitor for urgent events using a heartbeat fundamentally shifts the user experience from reactive querying to delegated management. This proactive nature is a major differentiator from standard chatbots.

The Power of Plaintext Memory: The fact that the bot’s “brain” (soul.md, user.md, agents.md, MEMORY.md) consists of editable Markdown files is a powerful developer takeaway. This transparency allows for direct, human-in-the-loop refinement of the agent’s personality, rules, and knowledge, eliminating “black box” behavior. The bot can even edit these files itself, showcasing self-modification.

Security is Paramount (and Self-Managed): The demo stresses the critical importance of security. From treating the gateway token as a master key that must be stored safely, to the bot hardening its own setup by implementing security documentation, it’s clear that the power of OpenClaw demands diligent guardrails. The incident where a bot detected hidden malicious text trying to trick it into reading a fake file system underscores the real-world risks and the need for the “principle of least privilege”. Leaving the allow-insecure-auth setting for browser access set to true, while convenient for setup, is flagged as a risk on public Wi-Fi, so production setups call for a VPN or a reverse proxy.

Cost Management is an Engineering Problem: The emphasis on LLM tiering and model routing is a crucial practical insight. Running expensive models for every routine task can lead to “overnight disaster stories”. The demonstration of configuring routing rules (e.g., using Claude Sonnet by default, Haiku for routine tasks, Opus for planning) highlights the need for a thoughtful cost optimization strategy. The potential for silent failures due to exhausted API credits also reinforces the need for fallback models, including free options.
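The routing-plus-fallback idea can be sketched in a few lines. This is an illustrative assumption, not OpenClaw's configuration format: the tier labels follow the example above, while the task categories, `ProviderError`, and the `call` parameter are hypothetical.

```python
from typing import Callable

# Tier labels follow the routing example above; task categories are assumptions.
ROUTES = {"routine": "haiku", "default": "sonnet", "planning": "opus"}


class ProviderError(Exception):
    """Raised by a provider call, e.g. when API credits are exhausted."""


def pick_models(task_kind: str, fallbacks: list) -> list:
    """Return the preferred model first, then fallbacks, without duplicates."""
    ordered = [ROUTES.get(task_kind, ROUTES["default"]), *fallbacks]
    return list(dict.fromkeys(ordered))


def complete(task_kind: str, prompt: str,
             call: Callable[[str, str], str], fallbacks: list) -> tuple:
    """Try each candidate model in order; raise only if every one fails."""
    errors = []
    for model in pick_models(task_kind, fallbacks):
        try:
            return model, call(model, prompt)
        except ProviderError as exc:
            errors.append(f"{model}: {exc}")
    raise RuntimeError("all models failed: " + "; ".join(errors))
```

Returning the model name alongside the response makes it visible when a fallback was used, and the final `RuntimeError` turns an otherwise silent failure into a loud one.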

Tedious Setup (but worth it): The Google Workspace OAuth setup, while a common pain point for any deep integration, is candidly described as “the most annoying part of the entire setup”. This signals that advanced capabilities often come with initial configuration overhead, a reality for agent builders.

Sub-Agents for Scalability: The multi-agent research task (comparing n8n, Zapier, make.com) demonstrates how complex projects can be broken down and parallelized. The bot’s ability to spawn and manage these sub-agents, each potentially with optimized models, is a powerful architectural pattern for tackling larger workloads efficiently. The initial failure to perform web search until a Brave Search API key was provided also illustrates the clear dependency of agent capabilities on available tools.

Resilience and Recovery: OpenClaw’s frequent updates and the various recovery options (stopping processes, Docker stop, revoking API keys, restoring snapshots) indicate a system designed for ongoing operation in potentially unpredictable environments. Backing up configurations before each update is strongly advised.

Why This Matters

OpenClaw’s approach has profound implications for AI agents, automation, and the broader digital ecosystem:

True Agency and Proactivity: It moves beyond the “copilot” paradigm to genuine “agents” that can initiate actions, monitor conditions, and operate autonomously. This is a fundamental shift in how we interact with software, enabling proactive workflows rather than merely reactive assistance.

Personalization and Control: By being self-hosted and local-first, OpenClaw gives users unprecedented control over their AI assistant’s brain, data, and security. This addresses growing concerns about data privacy and vendor lock-in, empowering users to truly “own” their AI. The transparency of markdown-based memory fosters trust and explainability.

Democratization of Advanced AI Workflows: OpenClaw lowers the barrier to entry for building sophisticated, multi-tool AI automations without needing to write code. This opens up advanced agent capabilities to a wider audience of technically curious users, founders, and small businesses for automating tasks like lead generation, customer support, and personal productivity.

Open-Source Ecosystem Development: As an open-source project (MIT licensed), OpenClaw fosters community contributions to skills, integrations, and architectural improvements. This collaborative model accelerates innovation and strengthens the agent’s capabilities beyond what a single commercial entity could achieve.

Blueprint for Agent Architecture: OpenClaw provides a concrete, working example of an agent architecture that effectively integrates LLMs with external tools, memory, and scheduled execution. Developers can learn from its design patterns for handling context, managing state, and orchestrating complex tasks, which is crucial for the evolving field of agent engineering.

Catalyst for Digital Workers: The ability to run multiple sub-agents simultaneously foreshadows a future where complex tasks are broken down and delegated to specialized “digital workers” within an organization, leading to more efficient and scalable automation across businesses.

Practical Takeaways For Builders

OpenClaw offers invaluable lessons and design patterns for anyone building AI agents:

Prioritize Persistent, Transparent Memory: The file-based Markdown memory system is a standout feature. Implement memory that is not only persistent across sessions but also human-readable and editable. This builds trust, aids debugging, and allows for direct intervention to correct or refine the agent’s knowledge. Consider hybrid approaches that combine transparent file storage with vector indexing for efficient retrieval.

Architect for Proactivity (Heartbeat & Cron Jobs): Design agents that aren’t purely reactive. Incorporate scheduled tasks (cron jobs) and continuous monitoring loops (heartbeats) to enable agents to act on their own initiative. This moves them from mere assistants to autonomous partners.
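One way to structure both mechanisms is as small objects that a long-running loop “ticks” with the current time. The sketch below is an assumption-laden illustration: `Heartbeat` and `DailyJob` are hypothetical names, and the time is injected so the logic can be tested without waiting.

```python
from dataclasses import dataclass, field
from datetime import date, datetime, time, timedelta
from typing import Callable, Optional


@dataclass
class Heartbeat:
    """Runs its checks whenever the configured interval has elapsed."""
    interval: timedelta
    checks: list = field(default_factory=list)
    last_run: Optional[datetime] = None

    def tick(self, now: datetime) -> list:
        if self.last_run is not None and now - self.last_run < self.interval:
            return []  # too soon since the last wake-up
        self.last_run = now
        # Keep only checks that report something needing attention.
        return [note for check in self.checks if (note := check(now))]


@dataclass
class DailyJob:
    """Fires at most once per day, at or after a fixed time of day."""
    at: time
    action: Callable[[], str]
    last_date: Optional[date] = None

    def tick(self, now: datetime) -> Optional[str]:
        if now.time() >= self.at and self.last_date != now.date():
            self.last_date = now.date()
            return self.action()
        return None
```

A host process would call `tick(datetime.now())` on each object every minute or so. The heartbeat suits open-ended “is anything urgent?” checks, and the daily job suits fixed deliverables such as a morning briefing.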

Implement Robust Security from Day One: Treat agent security as a critical architectural concern, not an afterthought. Employ the principle of least privilege for tool access, implement spending limits for API usage, and always validate external inputs. The self-hardening demonstration is a powerful example of an agent enforcing its own security posture. Isolate your agent environment (e.g., Docker, VPS) to contain potential breaches.

Embrace Model Routing for Cost Efficiency: Recognize that not all tasks require the most expensive LLM. Implement intelligent model routing to dynamically select the appropriate (and cheapest) model based on the complexity and type of task. This is a crucial cost optimization strategy that can dramatically reduce API bills.

Leverage Multi-Agent Orchestration: For complex problems, break them down into smaller, parallelizable tasks that can be handled by specialized sub-agents. Design an orchestration layer that delegates work, collects results, and synthesizes outcomes. This improves efficiency, scalability, and context management by scoping each agent’s focus.

Modular Tooling (Skills): Develop a flexible “skills” or “plugin” system that allows easy extension of agent capabilities. This promotes a rich ecosystem and enables the agent to adapt to new integrations without core code changes. Always include security checks (like VirusTotal scans) for third-party skills.
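A directory-per-skill layout is easy to discover at startup. The sketch below scans for sub-directories containing a SKILL.md file. The metadata header format it parses (a `---` delimited block of `key: value` lines) is an assumption made for illustration, and `parse_skill` and `discover_skills` are hypothetical helpers.

```python
from pathlib import Path


def parse_skill(text: str) -> dict:
    """Split an assumed '---' delimited 'key: value' header from the body."""
    meta, body = {}, text
    if text.startswith("---\n"):
        header, _, body = text[4:].partition("\n---\n")
        for line in header.splitlines():
            key, sep, value = line.partition(":")
            if sep:  # ignore lines without a colon
                meta[key.strip()] = value.strip()
    return {"meta": meta, "instructions": body.strip()}


def discover_skills(root: Path) -> dict:
    """Map each sub-directory that contains a SKILL.md to its parsed contents."""
    skills = {}
    for manifest in sorted(root.glob("*/SKILL.md")):
        text = manifest.read_text(encoding="utf-8")
        skills[manifest.parent.name] = parse_skill(text)
    return skills
```

Because a skill is only a folder with a readable manifest, installing or auditing one does not require touching the core code. A security scan for third-party skills would slot in before `parse_skill` is called.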

Build for Resilience and Recoverability: Frequent updates are common in the fast-evolving AI landscape. Incorporate mechanisms for easy updates, reliable backups, and clear recovery procedures (e.g., stopping processes, API key revocation, snapshot restores) to ensure operational continuity.

Final Thoughts

OpenClaw is more than just another AI tool; it’s a living testament to the evolution of AI agents towards true autonomy and integration. By providing a transparent, self-hosted, and extensible framework, it offers a tangible glimpse into a future where AI assistants are not just smart conversationalists, but indispensable digital operatives. The lessons from OpenClaw’s architecture, from its file-based memory to its proactive scheduling and multi-agent capabilities, will undoubtedly inform the next generation of AI systems. For builders, this is a call to move beyond prompt engineering to architecting robust, secure, and truly autonomous agentic workflows. The journey from reactive chatbots to proactive digital workers has only just begun, and OpenClaw is showing us a clear path forward.